Ask A Genius 60 – Consciousness 1

Scott Douglas Jacobsen and Rick Rosner

January 16, 2017

Scott: What about the lexical definition, in your terminology, of consciousness or the descriptive unavoidability of consciousness?

Rick: Let’s start with what I think or what we think consciousness is, which is the broadband, real-time sharing of mutually understood information among specialist sub-systems in an information processing system with each sub-system having the same approximate information under consideration.

That is, a bunch of sensory information is flowing into a system. At the same time, the system is generating a lot of processed information, both from the sensory information coming in and from the analytic information streaming out of each of the specialist sub-systems, with each of the specialist sub-systems having the same approximate mass of external and internal information under consideration.

That is, look at us humans with our sensory bulbs on top of our necks, we’re taking in all of this information, then we’re thinking about all of this information with specialist sub-systems sharpening and compressing the information in ways that are what each sub-system has been designed for (evolved for), or is best at.

Same mass of information. 300 different sub-systems. Each spewing its own information giving its own interpretation of that information. Reasonable?

Scott: Yes, but that leads to a question about time. Suppose there is another information processing system that processes 100 times faster than us. If it looked at us processing information in its real-time, our information processing would seem much more disparate.

So, could we consider our information processing node sets inside our skulls integrated sufficiently to be called “conscious”?

Rick: I’ve got two answers for that. At some point, in arguing for consciousness – there’s an argument to be made, if it feels like consciousness to the conscious being, and if it’s mathematically consciousness-like based on the mathematics of consciousness that we don’t have yet, then it’s consciousness. That’s answer one, which is a bit circular. It is consciousness according to a mathematical definition of consciousness we don’t have yet.

Scott: It’s qualitative, not quantitative.

Rick: Yeah, but that’s not good enough because you could build a computer that announces it’s conscious 24/7. It could announce, “I’m conscious. I’m hot. I don’t like that. It’s warm here, and cold there.” It could make all sorts of conscious-sounding noise without actually being conscious. You need the mathematical aspect of consciousness. That includes enough different specialist sub-systems contributing to the cross-chat.

Chris, a buddy of mine, and I were talking about a Go playing computer being conscious. I argued “not very likely” because of the tasks involved in being a Go playing computer, unless the Go playing computer had 300 different specialist sub-systems, each with its own angle on what it sees on the Go board.

Each of those sub-systems would contribute to an overall, really richly painted vision of the situation on the Go board. Even then, it is pretty tough to get consciousness out of that because if you’re limited to the Go board, like 16×16 or 24×24, then it’s limited in terms of information, so you need to self-generate all sorts of internal information about the external information.

Even so, it is pretty limited. It leads to something pretty narrow. The multiplicity of information isn’t there. Once we understand the mechanisms of consciousness, you could design a Go playing computer that has a profound knowledge of, and sentiment about, every situation on the Go board, but it would still be a pretty crappy form of consciousness.

Scott: In a way, it’s a ladder. In another way, things build up pretty quickly. Things, as you note in an older analogy…

Rick: …the wheel…

Scott: …yeah, the wheel. Things become more rounded as you add more sides. Things become pretty rounded once you add only a few sides.

Rick: After you get to an octagon, you could imagine a car with octagonal wheels. It is a rough ride, but it would move. 10 is good. 16 is better. Once you get to a 40-sided wheel, that’s not a terrible ride. The difference in quality of ride for practical purposes between a 100-sided and a 200-sided regular polygon is negligible. Things reach approximate circularity.

The curve of approaching something reasonably defined as consciousness is a pretty steep curve. You don’t have to do much work once you start to create something that is conscious.
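The wheel analogy can be given rough numbers. The following is a minimal sketch, not anything Rick or Scott computed: it assumes a regular polygon rolling on flat ground without slipping, and the function name `bump_fraction` is made up for illustration. Under that assumption the axle sits at the apothem height, R·cos(π/n), when the wheel rests on a side, and at the full radius R when it balances on a corner, so the bump per side is 1 − cos(π/n) of the radius.

```python
import math


def bump_fraction(sides: int) -> float:
    """Rise and fall of the axle of a rolling regular polygon,
    expressed as a fraction of its corner-to-center radius.

    Illustrative assumption: rigid wheel, flat ground, no slipping.
    """
    if sides < 3:
        raise ValueError("a polygon needs at least 3 sides")
    # Resting on a side, the axle is at R*cos(pi/n); on a corner it is at R.
    return 1.0 - math.cos(math.pi / sides)


if __name__ == "__main__":
    # The side counts mentioned in the conversation.
    for n in (8, 10, 16, 40, 100, 200):
        print(f"{n:>3} sides: bump is {bump_fraction(n):.4%} of the radius")
```

On these assumptions the octagon bumps by roughly 7.6% of the radius, the 40-sided wheel by roughly 0.3%, and the 100-sided and 200-sided wheels both by less than 0.05%, which matches the intuition that most of the smoothing arrives within the first few dozen sides.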

Scott: I could easily see analytic programs designed to take into account all of the algorithms that a particular software program has, and then present a literal wheel, as a metaphor, to show a human operator how conscious a system is, for easy interpretation.

Rick: That would be good. There are probably 20 better analogies that we don’t know yet, but for right now that is a good one. I don’t know whether, if I found myself in another consciousness, I’d prefer being in a grasshopper or the Go computer. I’d probably prefer the grasshopper, but if you went to an earthworm, then I would prefer the Go computer. Ants, I don’t know.

With ants, maybe some part of their mental sophistication, to the extent that they have any – like 10% of it – is based on the pheromone system that links ants together. As for that Go computer consciousness, it is a narrow space to be conscious in.

We’ve got a fairly rough, but workwithable, idea of what are some of the necessary ingredients and functions of consciousness. That cumbersome definition that we’re talking about can be worked with to some extent.

Given that background, we can get to a sentence-based, sloppy proof of the necessity of consciousness as what my buddy Chris calls an epiphenomenon. I’d go with epiphenomenon, meaning something you get that goes along with the things you need. It is not needed, but arises as the result of a certain type of information processing.

Scott: It is an emotional and cognitive spandrel. Like your bellybutton, it’s almost just kind of there.

Rick: Yeah, but I would argue it is more than almost just there. In fact, my buddy Chris, who I keep bringing up because I just had a many-hours-long conversation with him about this stuff, brings up the example of the appendix. It has been for more than 100 years the stereotypical not-needed organ in the body. It doesn’t do anything, like men’s nipples.

Now, it turns out that the appendix may be a place for good bacteria to hang out, in case you happen to wipe out the good bacteria in your intestines. A crazy amount of the cells in your body are bacteria. If they get out of whack, it makes digesting problematic, and your appendix might just be a reference library to keep things on track if something bad happens to your intestinal bacteria.

You could describe the things in your awareness with sentences, though describing everything in a given moment would take a gazillion sentences per second. You could summarize the knowledge in your moment-to-moment awareness with 1,000 sentences a second. It would describe the room you’re in, which is mostly visual information. So, visual awareness described by sentences. Also, things in your imagination.

Feel information, meaning how each part of your body feels, along with emotional information, taste information, and sensory information in general, could be described in hundreds of sentences. There would be sentences to describe things in imagination. After someone says, “Tetrahedron,” you have an image of a tetrahedron in your head. Same with “elephant.” You think of an elephant and have a representation of an elephant. There would also be sentences for how you feel about your situation at the time, how you feel about yourself, and how your body itself feels.

Say you’re limited to 1,000 sentences per second, which isn’t unreasonable. Somebody like a Marcel Proust of the Computer Age could write 1,000 sentences that give, not an all-encompassing description, but a pretty decent summarization of your awareness. Some of those sentences, as we’ve just listed, are sentences associated with being conscious.

I would argue the feel of consciousness is itself consciousness, and is this global busybodiness of every sub-system being roughly aware of what every other sub-system is doing and being aware of this massive gob of information they share. This is massive, broadband information crosstalk – at least in evolved organisms, whose job it is to survive long enough to reproduce and raise offspring and which, thus, have a self-interest.

You can imagine conscious entities, engineered entities, without this self-interest. However, almost all conscious entities are interested in perpetuating themselves, in perpetuating the species. So, you have all of this self-monitoring stuff, that is, sentences that are indicative of human consciousness, creature consciousness.

That is, they reflect your emotions about your situation and, at the most basic level, your chances to live and reproduce. You have a bunch of trivial concerns, which, if you trace them back, lead to something survival-related. You have all of these sentences. It needs to be refined as an argument.

However, you have these sentences that are strongly indicative and reflective of consciousness, both the mathematical form of consciousness, which is this shared gossip or information held in common, and the human form of consciousness that we are more comfortable talking about, e.g. how we feel, emotions and such.

You have these descriptive sentences that describe external sensory information, e.g. the score in a football game, or whether a light is green or red. Some sentences describe internal information. Some sentences indicate consciousness. These kinds of sentences are basically the same as one another. They are all based on information held in your consciousness or in your brain.

You have sentences. All of the sentences descriptive of the information in your brain are by definition reflective of the information in your brain. They are coming from the same thing. They come from your mental agglomeration of information. They are not qualitatively different from any other descriptive sentence that describes the contents of your awareness.

From there, you can describe the sentences that reflect consciousness as being just as valid and unavoidable as the sentences that are more apparently concrete because they describe definite sensory information. There’s a sloppy proof there.

Author(s)

Scott Douglas Jacobsen

Editor-in-Chief, In-Sight Publishing

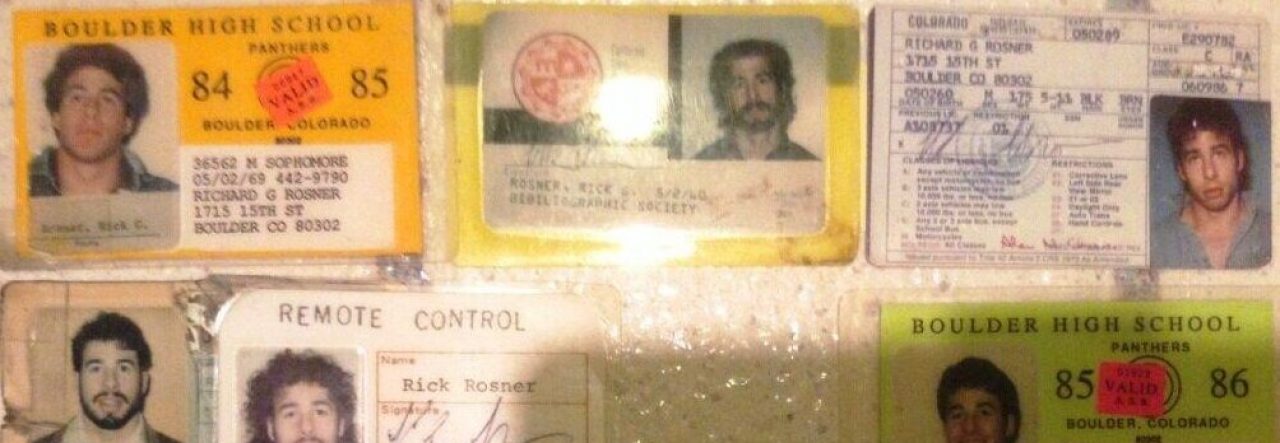

Rick Rosner

American Television Writer

License and Copyright

License

In-Sight Publishing and In-Sight: Independent Interview-Based Journal by Scott Douglas Jacobsen is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Based on a work at www.in-sightjournal.com and www.rickrosner.org.

Copyright

© Scott Douglas Jacobsen, Rick Rosner, and In-Sight Publishing and In-Sight: Independent Interview-Based Journal 2012-2017. Unauthorized use and/or duplication of this material without express and written permission from this site’s author and/or owner is strictly prohibited. Excerpts and links may be used, provided that full and clear credit is given to Scott Douglas Jacobsen, Rick Rosner, and In-Sight Publishing and In-Sight: Independent Interview-Based Journal with appropriate and specific direction to the original content.